Azure Kubernetes Service (AKS) simplifies cluster management through Microsoft’s managed control plane, but disaster recovery planning remains the operator’s responsibility. Microsoft’s Azure Backup for AKS has evolved substantially—it now automatically deploys Velero within clusters, providing namespace-scoped backup capabilities and application hooks that address many common DR scenarios effectively.

Many organizations rely on Azure Backup with Velero for AKS disaster recovery. For some workloads, Velero’s community-supported capabilities suffice. For others, cross-region complexity, strict RTO requirements, or the need for guaranteed incident support justify adopting enterprise solutions.

This article examines five best practices for building resilient AKS environments, starting with baseline protection and progressing through testing, automation, and solution selection aligned with operational maturity.

Summary of key AKS disaster recovery best practices

| Best practice | Description |
|---|---|
| Establish baseline DR protection | Deploy Azure Backup for AKS to enable Velero-based namespace backups, configure application hooks for consistency, and validate basic restore operations within regions. Native Azure tools handle many DR scenarios effectively, but teams still need to assess cross-region restore complexity and operational support requirements. |
| Address cross-region failover complexity | Velero enables backup replication to paired Azure regions and uses Azure Backup’s Resource Modifiers for basic resource transformations. Organizations must choose between documented manual procedures (acceptable for infrequent DR) and automated restore transforms (necessary for regular testing and strict RTO requirements). |
| Orchestrate application-consistent backups | Velero hooks provide per-pod consistency commands, but production environments require coordinated execution across distributed applications. Implement dependency-aware backup workflows that ensure databases flush all in-memory data to disk before dependent services begin backup operations. |
| Test DR procedures regularly and measure RTO | Schedule quarterly DR tests using isolated namespaces to validate backup integrity and measure actual recovery times. Real-world testing frequently uncovers discrepancies between projected RTO estimates and the actual time required for restoration, including cluster provisioning, data transfer, and necessary manual configuration. |
| Choose solutions aligned with operational maturity | Match DR tooling to team expertise, on-call capabilities, and acceptable risk during production incidents. Evaluate the trade-offs between community-supported projects such as Velero and vendor-supported solutions that offer committed SLAs. |


Establish baseline DR protection with Azure-native tools

Azure Backup for AKS integrates Velero directly into your cluster, eliminating the need to install and configure it manually. When you enable Azure Backup, the service deploys the Velero components, configures Azure Blob Storage as the backup target, and provides management through the Azure Backup vault. This integration offers namespace-scoped backup capabilities without deploying third-party tools.

Velero’s architecture consists of a set of controllers in the AKS cluster that watch the Kubernetes API and execute backup operations according to configured schedules. A typical backup policy might protect production namespaces hourly, retaining daily snapshots for 30 days and weekly snapshots for 90 days. The backup process captures Kubernetes resources (e.g., Deployments, StatefulSets, ConfigMaps, and Secrets) and persistent volume snapshots via Azure Disk integration.

Configuring namespace-scoped protection lets teams manage backup policies for the namespaces they own:

```bash
# Create a one-off backup of the production namespace
# (azure-backup-vault is the name of an existing BackupStorageLocation)
velero backup create prod-backup \
  --include-namespaces production \
  --storage-location azure-backup-vault

# Schedule daily backups at 02:00 and keep each one for 30 days (720h)
velero schedule create production-daily \
  --schedule="0 2 * * *" \
  --include-namespaces production \
  --ttl 720h
```

Application hooks turn Velero’s crash-consistent snapshots into application-aware backups. Consider a PostgreSQL database running in AKS that must flush in-memory buffers before a snapshot is taken. Velero hooks run pre-backup commands that prepare the application for a consistent snapshot:

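```yaml
apiVersion: v1
kind: Pod
metadata:
  name: postgres-0
  annotations:
    # pg_start_backup/pg_stop_backup are SQL functions, so they must run through psql.
    # Exclusive backup mode (needed because each hook runs in its own session) exists
    # only in PostgreSQL 14 and earlier; PostgreSQL 15 replaced these functions.
    pre.hook.backup.velero.io/command: '["/bin/bash", "-c", "psql -U postgres -c \"SELECT pg_start_backup(''velero'', true);\""]'
    post.hook.backup.velero.io/command: '["/bin/bash", "-c", "psql -U postgres -c \"SELECT pg_stop_backup();\""]'
# (pod spec omitted)
```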

Testing basic restore operations validates your backup configuration before you need to rely on it during actual incidents. Restore a recent backup to a test namespace, verify application functionality, then delete the test environment. This validation confirms that backups capture necessary resources and that persistent volume snapshots contain viable data.
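A restore test is only as useful as the check that follows it. The sketch below compares the resources recorded in a backup with those that actually appear in the test namespace. It assumes both inventories have already been collected as (kind, name) pairs; the dr-test namespace name and the missing_after_restore helper are hypothetical.

```python
"""Compare a backup's resource inventory with what a test restore produced.

Hypothetical inputs: (kind, name) pairs gathered beforehand, e.g. from
  velero backup describe prod-backup --details
and from kubectl get in the test namespace after
  velero restore create --from-backup prod-backup --namespace-mappings production:dr-test
"""
from typing import Iterable, List, Tuple

Resource = Tuple[str, str]  # (kind, name)


def missing_after_restore(backed_up: Iterable[Resource],
                          restored: Iterable[Resource]) -> List[Resource]:
    """Return resources recorded in the backup but absent after the restore, sorted."""
    return sorted(set(backed_up) - set(restored))


if __name__ == "__main__":
    backup = [("Deployment", "api"), ("Service", "api"),
              ("PersistentVolumeClaim", "data-postgres-0")]
    restored = [("Deployment", "api"), ("Service", "api")]
    for kind, name in missing_after_restore(backup, restored):
        print(f"missing after restore: {kind}/{name}")
```

An empty result only proves that the objects exist; the application checks described above are still needed to confirm the data inside the volumes is usable.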

The Velero “foundation” lets you effectively handle many DR scenarios: namespace-level protection, scheduled backups, and basic application consistency. Organizations with straightforward applications, flexible RTO requirements, and expertise in managing Kubernetes-native tools may find this baseline sufficient. However, cross-region restore complexity and production support considerations often reveal operational gaps that require evaluation.

Address cross-region failover operational complexity

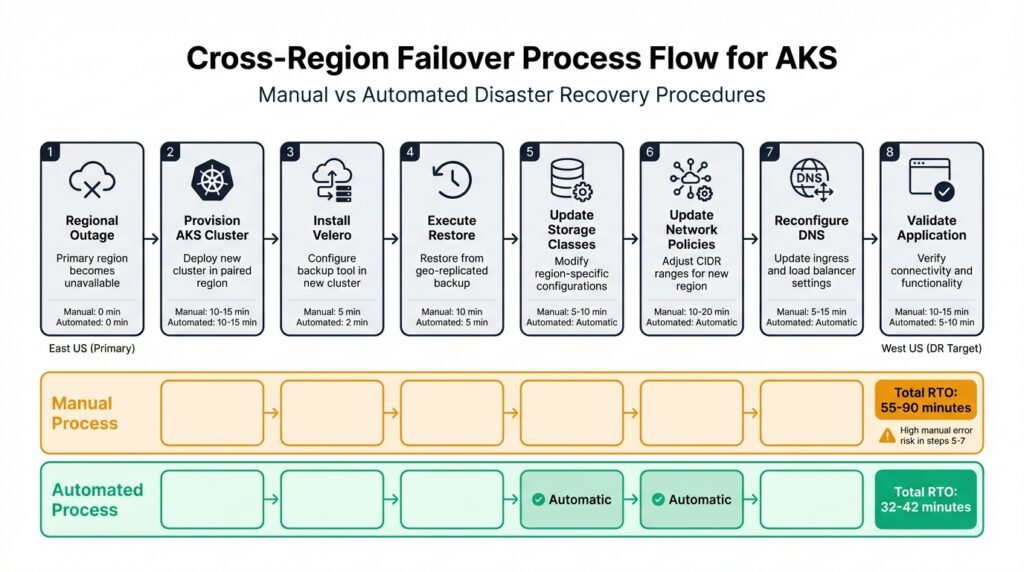

Azure paired regions provide geographic separation for disaster recovery, pairing, for example, East US with West US and North Europe with West Europe. Velero supports backup replication to paired regions via Azure Blob Storage geo-redundancy, providing a foundation for regional failover. The challenge emerges during restore operations when region-specific infrastructure differences require manual intervention. Here is a representation of the typical flow.

Cross-regional failover process flow for AKS

This infographic shows the 8-step cross-region failover process for AKS disaster recovery, comparing manual and automated approaches. It highlights the time differences and error risks between the two methods, with total RTO ranging from 32-42 minutes (automated) to 55-90 minutes (manual).

Consider a workload with these characteristics:

- A production AKS cluster in East US references infrastructure that doesn’t exist in West US.

- Storage classes use managed-premium with East US availability zone configurations.

- Network policies allow traffic from East US VNet CIDR ranges (10.0.0.0/16).

- Ingress controllers route to East US load balancers with specific DNS configurations.

- Azure managed identities scope to East US resource groups.

Restoring this workload to West US requires modifying each region-specific element. The manual cross-region restore workflow involves multiple steps where human error can extend recovery time:

1. Provision a new AKS cluster in West US (10-15 minutes)

2. Install Velero pointing to geo-replicated blob storage

3. Execute the restore command

4. Modify storage class references in restored PVCs

5. Update network policy CIDR ranges

6. Reconfigure ingress DNS names

7. Update managed identity scopes

8. Validate application connectivity

Steps 1-3 are infrastructure setup that must happen regardless of tooling choice. Steps 4-7 involve editing Kubernetes resource manifests—work that Azure Backup’s Resource Modifiers can partially automate through ConfigMap-based JSON patches for simple transformations. Enterprise solutions extend this capability with policy-driven transforms that handle complex scenarios like coordinated DNS updates, network policy rewrites across multiple resources, and identity configuration changes.

AKS cross-region failover step-by-step process

Each manual step adds time to the total procedure and introduces failure points. Mistyped CIDR ranges in network policies, incorrect storage class mappings, or forgotten DNS updates prevent applications from functioning correctly after restore. When organizations test their disaster recovery procedures, they often find that such issues stretch a recovery expected to finish in under an hour into several hours.

| Manual step | Time impact | Error risk |
|---|---|---|
| AKS cluster provisioning | 10-15 min | Low (automated by Azure) |
| Storage class editing | 5-10 min | High (manual YAML modification) |
| Network policy updates | 10-20 min | High (CIDR range accuracy) |
| DNS reconfiguration | 5-15 min | Medium (propagation delays) |
| Service principal updates | 10-15 min | Medium (scope configuration) |
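Summing the table's per-step ranges shows how quickly the manual steps alone add up. The short calculation below uses only the figures in the table and assumes the steps run one after another.

```python
# (min, max) minutes per manual step, taken from the table above.
MANUAL_STEPS = {
    "AKS cluster provisioning": (10, 15),
    "Storage class editing": (5, 10),
    "Network policy updates": (10, 20),
    "DNS reconfiguration": (5, 15),
    "Service principal updates": (10, 15),
}


def total_range(steps: dict) -> tuple:
    """Best and worst case if the steps run sequentially."""
    best = sum(low for low, _ in steps.values())
    worst = sum(high for _, high in steps.values())
    return best, worst


print(total_range(MANUAL_STEPS))  # (40, 75)
```

The five tabulated steps account for 40 to 75 minutes, and the two high-risk edits (storage classes and network policies) contribute 15 to 30 minutes of that. The rest of the flow (installing Velero, running the restore, and validating connectivity) is not in the table, which is consistent with the higher 55-90 minute manual total in the infographic.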

Trilio’s automated RestoreTransforms feature eliminates these manual steps by performing policy-driven resource modifications. Let’s review an example of how it can be defined as a Kubernetes resource:

```yaml
apiVersion: triliovault.trilio.io/v1
kind: RestoreTransform
metadata:
  name: east-to-west-failover
spec:
  transforms:
  - type: storageClass
    source: managed-premium
    target: managed-standard-ssd
  - type: networkPolicy
    cidrRewrite:
      from: 10.0.0.0/16
      to: 10.1.0.0/16
  - type: ingress
    dnsRewrite:
      from: app.eastus.example.com
      to: app.westus.example.com
```

The decision between manual procedures and automation depends on how often you test. If your organization runs DR drills once a year and can tolerate longer recovery windows, documented manual procedures work fine. But teams testing quarterly, or those facing strict recovery time requirements, discover that manual steps become error-prone under pressure. Automation applies the same transforms consistently, whether you’re running a test or responding to an actual regional outage at 2 AM.
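The cidrRewrite transform in the RestoreTransform example is, at its core, address arithmetic: a subnet inside the East US range should land at the same offset inside the West US range. The sketch below shows that mapping with Python's standard ipaddress module. It illustrates the idea under the assumption that both ranges share a prefix length; it is not a description of how Trilio implements the transform, and rewrite_cidr is a hypothetical helper.

```python
import ipaddress


def rewrite_cidr(cidr: str, source: str, target: str) -> str:
    """Move a network inside `source` to the same offset inside `target`.

    Networks outside `source` are returned unchanged. Assumes `source` and `target`
    have the same prefix length (e.g. two /16 VNet ranges).
    """
    net = ipaddress.ip_network(cidr)
    src = ipaddress.ip_network(source)
    dst = ipaddress.ip_network(target)
    if src.prefixlen != dst.prefixlen or src.version != dst.version:
        raise ValueError("source and target must be same-size ranges of one IP version")
    if net.version != src.version or not net.subnet_of(src):
        return cidr  # not part of the region-specific range; leave it alone
    offset = int(net.network_address) - int(src.network_address)
    return str(ipaddress.ip_network(f"{dst.network_address + offset}/{net.prefixlen}"))


for rule_cidr in ["10.0.0.0/16", "10.0.5.0/24", "192.168.1.0/24"]:
    print(rule_cidr, "->", rewrite_cidr(rule_cidr, "10.0.0.0/16", "10.1.0.0/16"))
# 10.0.0.0/16 -> 10.1.0.0/16
# 10.0.5.0/24 -> 10.1.5.0/24
# 192.168.1.0/24 -> 192.168.1.0/24
```

Preserving the offset, rather than only swapping the exact string "10.0.0.0/16", also catches the narrower subnet rules that a find-and-replace would miss.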

Pre-provisioning a standby cluster in your paired region removes the 10-15 minute cluster provisioning step from recovery, but it roughly doubles your infrastructure costs if the standby is sized like production: an idle West US standby cluster costs about as much as the production East US cluster. Some organizations accept this expense because their RTO demands immediate failover. Others decide that provisioning a new cluster during an incident is acceptable, since regional outages happen infrequently enough that the cost savings justify the delay.

Orchestrate application-consistent backups across dependencies

Velero hooks provide per-pod consistency by annotating commands to run before and after backup operations. A MongoDB replica set benefits from coordinated write flushing:

```yaml
annotations:
  # Uses the legacy mongo shell; MongoDB 6.0 and later ship mongosh instead.
  pre.hook.backup.velero.io/command: '["/usr/bin/mongo", "--eval", "db.fsyncLock()"]'
  post.hook.backup.velero.io/command: '["/usr/bin/mongo", "--eval", "db.fsyncUnlock()"]'
  pre.hook.backup.velero.io/timeout: 30s
```

This works well for single applications, but in production environments, distributed systems make the problem more complex.

Consider an ecommerce platform with frontend pods, an API gateway, an inventory service, and a PostgreSQL database. When Velero backs up the database at 2:00 PM, it locks tables and creates a consistent snapshot, but the inventory service doesn’t know this is happening; it keeps processing orders and writing new data. By 2:05 PM, the database backup completes, and the database is unlocked. At 2:10 PM, Velero backs up the inventory service, which now contains up to ten minutes of transactions (everything written since 2:00 PM) that the database snapshot doesn’t have.

During a disaster recovery event weeks later, you restore both backups. The inventory service shows orders that don’t exist in the database. Customers received confirmation emails for purchases that the restored database does not have a record of. This happens because Velero executes each pod’s hooks independently without coordinating timing across related services.

Dependency-aware backup workflows address this situation through coordinated execution sequences, like the one provided below:

1. Signal the application tier to stop accepting new requests.

2. Drain in-flight requests from the API gateway.

3. Flush application caches to the database.

4. Execute database pre-backup hook (lock tables).

5. Snapshot database persistent volumes.

6. Execute database post-backup hook (unlock tables).

7. Execute application-tier backups.

8. Resume normal operations.
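The ordering in this sequence matters most when something fails partway through. The sketch below expresses it as plain Python with try/finally so that the database lock is always released and traffic always resumes, even if the snapshot step raises. Every argument is a hypothetical callable standing in for a real action such as scaling an ingress, running a Velero hook, or triggering a volume snapshot.

```python
"""Dependency-aware backup sequence with guaranteed cleanup (sketch).

Each argument is a hypothetical zero-argument callable standing in for a real action.
"""
from typing import Callable

Step = Callable[[], None]


def coordinated_backup(stop_intake: Step, drain_gateway: Step, flush_caches: Step,
                       lock_db: Step, snapshot_db: Step, unlock_db: Step,
                       backup_app_tier: Step, resume: Step) -> None:
    stop_intake()                  # 1. stop accepting new requests
    try:
        drain_gateway()            # 2. let in-flight requests finish
        flush_caches()             # 3. push cached writes to the database
        lock_db()                  # 4. database pre-backup hook
        try:
            snapshot_db()          # 5. snapshot database volumes
        finally:
            unlock_db()            # 6. always release the lock, even on failure
        backup_app_tier()          # 7. back up the application tier
    finally:
        resume()                   # 8. always reopen to traffic
```

If the snapshot fails, the application-tier backup is skipped and the exception still propagates after cleanup, so the failure is reported rather than producing a half-consistent backup set.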

Enterprise backup solutions add workflow coordination to Velero’s capabilities. A backup plan defines which applications depend on others. The database backs up first, then the application services that use that database back up second. This sequencing prevents problems where restored applications look for data that isn’t in the restored database backup.
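Deriving that order from declared dependencies, rather than hard-coding it, keeps the plan correct as services are added. The sketch below uses graphlib.TopologicalSorter from the Python standard library (3.9+) on a hypothetical dependency map for the ecommerce example; a cycle in the map raises graphlib.CycleError, which is worth surfacing before any backup runs.

```python
from graphlib import TopologicalSorter

# Hypothetical map for the ecommerce example: service -> services it depends on.
DEPENDS_ON = {
    "frontend": {"api-gateway"},
    "api-gateway": {"inventory"},
    "inventory": {"postgres"},
    "postgres": set(),
}


def backup_order(depends_on: dict) -> list:
    """Dependencies first: each service is backed up after everything it depends on."""
    return list(TopologicalSorter(depends_on).static_order())


print(backup_order(DEPENDS_ON))
# ['postgres', 'inventory', 'api-gateway', 'frontend']
```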

Helm-based applications introduce additional considerations. A Helm release deploys multiple resources simultaneously: Deployments, Services, ConfigMaps, Secrets, and persistent volume claims. Velero can capture these resources, but Helm release metadata (release history, chart values) needs explicit attention in the backup configuration: Helm 3 stores each release revision as a Secret of type helm.sh/release.v1 in the release namespace, so a backup scope that excludes Secrets also drops the release records. Organizations that use Helm extensively should verify that the backup scope includes the release metadata required for operational procedures that rely on helm upgrade or helm rollback commands. Trilio’s Cloud-Native Backup and Restore for Helm helps address those nuances out of the box.
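Because Helm 3 keeps release records as Secrets, a quick inventory check can confirm that a backup actually contains them. The sketch assumes a list of (name, type) pairs for the Secrets captured in a backup (how that list is produced depends on your tooling) and relies only on Helm 3's default naming convention, sh.helm.release.v1.<release>.v<revision>. The helper names are hypothetical.

```python
"""Check that Helm 3 release records are present in a backup's Secret inventory.

Assumption: `secrets` is a list of (name, type) pairs for the Secrets in a backup.
"""
import re
from typing import Dict, Iterable, List, Tuple

# Helm 3 default storage: one Secret per revision, type "helm.sh/release.v1",
# named "sh.helm.release.v1.<release>.v<revision>".
RELEASE_SECRET = re.compile(r"^sh\.helm\.release\.v1\.(?P<release>.+)\.v(?P<rev>\d+)$")


def helm_revisions(secrets: Iterable[Tuple[str, str]]) -> Dict[str, List[int]]:
    """Map each release name to the sorted revisions found in the backup."""
    found: Dict[str, List[int]] = {}
    for name, secret_type in secrets:
        match = RELEASE_SECRET.match(name)
        if secret_type == "helm.sh/release.v1" and match:
            found.setdefault(match["release"], []).append(int(match["rev"]))
    return {release: sorted(revs) for release, revs in found.items()}


def missing_releases(secrets: Iterable[Tuple[str, str]], expected: Iterable[str]) -> List[str]:
    """Releases you expect to manage with Helm that have no record in the backup."""
    return sorted(set(expected) - set(helm_revisions(secrets)))
```

A release reported by missing_releases would have no history after a restore, so helm rollback would have nothing to roll back to.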

Test disaster recovery procedures regularly and measure RTO

Theoretical RTO estimates assume perfect execution of documented procedures under ideal conditions. Reality introduces delays: Cluster provisioning takes longer than expected, backup data transfer saturates available network bandwidth, and manual configuration steps require multiple attempts due to typos or missed dependencies.

Regular DR testing reveals these gaps between theory and practice. A good test workflow validates multiple aspects simultaneously.

The diagram below structures this validation process into three sequential phases, each with concrete steps that move from controlled test setup through timed execution to documented analysis. The RTO breakdown at the bottom translates the workflow into measurable time ranges per recovery stage, giving you a baseline to compare against your theoretical estimates and track improvement across quarterly test cycles.

DR testing and RTO measurement workflow
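Comparing a test run with the per-stage ranges in the workflow requires timing each stage as it happens. The RtoTimer class below is a minimal, hypothetical helper: wrap each recovery phase in a with block, and it accumulates wall-clock seconds per phase. Repeated attempts at the same phase add up, which is exactly the rework that theoretical estimates miss.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator


class RtoTimer:
    """Accumulate wall-clock time per recovery phase during a DR test."""

    def __init__(self) -> None:
        self.phases: Dict[str, float] = {}

    @contextmanager
    def phase(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record time even if the phase fails; retries of the same phase add up.
            elapsed = time.perf_counter() - start
            self.phases[name] = self.phases.get(name, 0.0) + elapsed

    def total_minutes(self) -> float:
        return sum(self.phases.values()) / 60

    def report(self) -> str:
        rows = [f"{name:<24}{secs / 60:8.2f} min" for name, secs in self.phases.items()]
        rows.append(f"{'Total (sum of phases)':<24}{self.total_minutes():8.2f} min")
        return "\n".join(rows)


if __name__ == "__main__":
    timer = RtoTimer()
    with timer.phase("cluster provisioning"):
        time.sleep(0.1)  # stand-in for the real work
    with timer.phase("restore"):
        time.sleep(0.1)
    print(timer.report())
```

Summing phases ignores idle time between them (waiting for an approval, for example), so also record the time from incident declaration to validated service and compare the two figures.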

Non-disruptive testing uses namespace isolation and network policies to prevent test workloads from impacting production:

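```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: dr-test-isolation
  namespace: dr-test-2024-q1
spec:
  podSelector: {}            # applies to every pod in the test namespace
  policyTypes:
  - Ingress
  - Egress
  ingress:
  - from:
    - podSelector: {}        # accept traffic only from pods in this namespace
  egress:
  - to:
    - podSelector: {}        # send traffic only to pods in this namespace
  - to:                      # allow DNS lookups against cluster DNS in kube-system
    - namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: kube-system
    ports:
    - protocol: UDP
      port: 53
    - protocol: TCP
      port: 53
```

This policy limits test-namespace pods to traffic from and to pods in the same namespace (plus DNS lookups), preventing accidental connections to production databases or external services. AKS enforces NetworkPolicy only when the cluster has a network policy engine enabled (Azure Network Policy Manager, Calico, or Cilium). Test completion involves validating application functionality in the isolated environment, documenting the actual RTO and any manual steps required, and then deleting the test namespace.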

Be sure to update DR documentation based on test findings. If a test revealed that storage class mapping required three attempts to get correct, document the correct mapping explicitly. If network policy CIDR ranges caused connectivity failures, add validation steps to verify ranges before executing a full restore. Documentation improvements compound over multiple test cycles, reducing errors during actual incidents.
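The CIDR validation step mentioned above can be automated. This sketch assumes you have already extracted the ipBlock CIDRs that are expected to sit inside the target VNet from the restored NetworkPolicy manifests, and that you know the target VNet range (10.1.0.0/16 in the West US example); it flags malformed entries as well as ranges that fall outside the target network. The invalid_cidrs helper is hypothetical.

```python
import ipaddress
from typing import Iterable, List, Tuple


def invalid_cidrs(policy_cidrs: Iterable[str], target_range: str) -> List[Tuple[str, str]]:
    """Return (cidr, reason) for every CIDR that is malformed or outside target_range."""
    target = ipaddress.ip_network(target_range)
    problems = []
    for cidr in policy_cidrs:
        try:
            net = ipaddress.ip_network(cidr)  # strict: host bits set is also an error
        except ValueError as err:
            problems.append((cidr, f"malformed: {err}"))
            continue
        if net.version != target.version or not net.subnet_of(target):
            problems.append((cidr, f"outside {target}"))
    return problems


for cidr, reason in invalid_cidrs(["10.1.4.0/24", "10.0.4.0/24", "10.1.0.0/33"], "10.1.0.0/16"):
    print(cidr, "->", reason)
```

Running a check like this before the full restore turns a mistyped range into an immediate error instead of a connectivity failure discovered after the application is up.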

Organizations often discover that continuous restoration claims of sub-15-minute RTO require specific conditions: applications with rapid startup characteristics, low data change rates enabling efficient incremental replication, and network connectivity between regions that supports replication bandwidth requirements. Testing validates whether your specific applications and infrastructure meet these conditions rather than assuming that vendor claims apply universally.
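A quick calculation shows why replication bandwidth is one of those conditions. The functions below convert data volume and link speed into transfer time, and a data change rate into the sustained bandwidth needed just to keep a replica current. The 500 GB, 1 Gbps, and 45 GB/hour figures are hypothetical inputs, not measurements.

```python
def transfer_minutes(data_gb: float, link_mbps: float, efficiency: float = 1.0) -> float:
    """Minutes to move data_gb gigabytes over a link_mbps link.

    Uses decimal units (1 GB = 8,000 megabits). `efficiency` in (0, 1] models protocol
    overhead and contention; 1.0 is the theoretical best case.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    megabits = data_gb * 8_000
    return megabits / (link_mbps * efficiency) / 60


def required_mbps(change_gb_per_hour: float) -> float:
    """Sustained bandwidth (Mbps) needed just to keep up with a data change rate."""
    return change_gb_per_hour * 8_000 / 3_600


print(round(transfer_minutes(500, 1_000), 1))  # 66.7
print(required_mbps(45))                        # 100.0
```

Under these assumptions, a full 500 GB copy over a dedicated 1 Gbps link takes about 67 minutes even at perfect efficiency, far beyond a 15-minute RTO. Sub-15-minute claims therefore depend on incremental replication keeping the target nearly current before the incident starts.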

Choose DR solutions aligned with operational maturity and risk

DR tooling selection balances capabilities against operational complexity and risk tolerance during production incidents. Velero provides robust backup and restore functionality as a community-supported CNCF project. Organizations with Kubernetes expertise, flexible RTO requirements, and a tolerance for community-supported models may find Velero sufficient. Others require guaranteed support during regional outages or automation that eliminates manual steps during high-stress incident response.

The support model difference matters most during actual incidents. Your primary Azure region goes down at 2 AM on a Saturday. You start the restore process to your DR cluster, but the PostgreSQL backup fails with an error about incompatible storage class configurations. With community-supported Velero, you open a GitHub issue. It joins the queue of open issues and waits. Maybe someone responds in an hour, maybe Monday morning. There’s no one to call, no escalation path for production emergencies, and no engineer who knows your specific setup.

Enterprise solutions like Trilio provide vendor support with committed SLAs:

- 24/7 support with response times based on severity level

- Root-cause analysis for failed restore operations

- Configuration review before DR tests to identify potential issues

- Direct engineering escalation for production-blocking problems

- Hotfixes and patches without waiting for community release cycles

Organizations face a trade-off: accept the uncertainty of community support or invest in guaranteed response times during production incidents. A team with deep Kubernetes knowledge and experience in troubleshooting Velero operations may operate effectively with community support. Teams with limited Kubernetes expertise, or those managing AKS alongside other responsibilities, benefit from vendor support that can guide them through incidents.

The radar chart below compares Velero and enterprise solutions across the five factors that matter most when production recovery is on the line. Velero holds its own on DR test frequency and team Kubernetes expertise, where deep hands-on knowledge compensates for the lack of automated tooling. The gap widens on the remaining three dimensions (application complexity, cross-region failover, and production support tolerance), where enterprise solutions pull significantly ahead. That last dimension, how much uncertainty your team can absorb without guaranteed support escalation paths, is often the deciding factor.

Velero vs enterprise solutions: capability comparison

Organizations should test both approaches when possible. Deploy Velero via Azure Backup, run several DR tests, measure the actual RTO, including all manual steps, and evaluate the operational burden. If the results meet requirements and the team can handle troubleshooting effectively, Velero provides cost-effective DR protection. If testing reveals operational gaps, automation needs, or support concerns, evaluate enterprise solutions based on specific gaps identified through testing rather than theoretical capability comparisons.

Conclusion

Building resilient AKS environments requires matching disaster recovery capabilities to application requirements, operational maturity, and risk tolerance. Azure Backup’s Velero integration provides namespace-scoped protection, application hooks, and cross-region replication, effectively handling many DR scenarios. However, as the workflows and comparisons throughout this article show, manual transformation steps, community-only support, and real-world RTO gaps can make this approach insufficient for production workloads with strict recovery requirements.

Production environments often encounter operational complexity during cross-region failover, dependency coordination across distributed applications, or support requirements during regional outages that extend beyond community-supported tooling. Regular testing reveals these gaps early, enabling informed decisions about automation investment and vendor support based on actual operational experience rather than theoretical capabilities.
